Walk into any modern Security Operations Centre and you will notice something different from even two years ago. The rows of analysts manually copying alert data between tabs, writing investigation notes in spreadsheets, and triaging the same categories of alert for the hundredth time that week — that scene is disappearing. In its place, an AI security operations analyst handles the investigative groundwork autonomously, while the human team focuses on the complex, ambiguous, high-stakes work that actually requires human judgment.

For enterprises with large SOC teams, this shift means efficiency gains and better analyst retention. For small businesses — the ones that could never afford a SOC team in the first place — it means something far more significant. An AI security operations analyst makes genuine, continuous security monitoring accessible at a price point and complexity level that finally makes sense for organisations with 10, 50, or 200 employees.

This article explores the operational reality of AI in security operations: what the AI security operations analyst role actually involves, how it integrates into real-world security workflows, what it handles versus what still requires a human, and how small businesses can adopt this capability without needing to become security experts themselves.

The Operational Problem the AI Security Operations Analyst Solves

To understand why the AI security operations analyst has become essential, you need to understand the operational reality of security monitoring in 2026.

Every business with any security tooling — antivirus, a firewall, email filtering, Microsoft 365 with its built-in security features — generates alerts. Each tool watches for suspicious activity and raises a flag when it spots something. In theory, these alerts should be investigated promptly: is this a genuine threat, or is it a false positive? In practice, the volume is paralysing.

A typical small business with 50 endpoints, Microsoft 365, and a managed firewall can generate hundreds of alerts per week. A medium-sized business with EDR, a SIEM, cloud security monitoring, and email filtering can generate thousands. Each alert needs context before anyone can decide whether it matters. That context requires querying multiple systems — checking whether the user account is legitimate, whether the IP address is known-malicious, whether the file hash matches known malware, whether the behaviour pattern is normal for that user at that time of day.

For a traditional human analyst, each investigation takes 20 to 60 minutes. Multiply that by hundreds or thousands of alerts, and the maths collapses immediately. Even well-staffed enterprise SOCs investigate only a fraction of their alerts. For small businesses without any dedicated security staff, the alerts simply pile up unseen — a mounting backlog of potential threats that nobody has time to look at.
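To make that arithmetic concrete, here is a back-of-envelope calculation. It assumes 300 alerts a week (one reading of "hundreds") and a 37.5-hour working week. Both figures are illustrative assumptions, not measurements; only the 20 to 60 minute range comes from the text above.

```python
# Back-of-envelope analyst workload estimate.
# ALERTS_PER_WEEK and FTE_HOURS_PER_WEEK are illustrative assumptions;
# the 20-60 minute range per investigation comes from the article.
ALERTS_PER_WEEK = 300
MINUTES_PER_INVESTIGATION = (20, 60)
FTE_HOURS_PER_WEEK = 37.5


def analyst_hours(alerts: int, minutes_each: float) -> float:
    """Hours needed to investigate every alert once."""
    return alerts * minutes_each / 60


for minutes in MINUTES_PER_INVESTIGATION:
    hours = analyst_hours(ALERTS_PER_WEEK, minutes)
    analysts = hours / FTE_HOURS_PER_WEEK
    print(f"{minutes} min per alert: {hours:.0f} hours/week, "
          f"about {analysts:.1f} full-time analysts")
```

Under these assumptions, even the quick end of the range is 100 hours of investigation a week (roughly 2.7 full-time analysts doing nothing else), and the slow end is 300 hours (8 analysts), before any out-of-hours cover is considered.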

This is the problem an AI security operations analyst solves. It processes every alert, investigates each one autonomously by querying the same tools a human analyst would use, correlates evidence across multiple data sources, and delivers a verdict — all in under ten minutes per alert, around the clock, without breaks, backlogs, or fatigue. If you are unfamiliar with how a Security Operations Centre functions, our explainer on what a SOC is and why your business needs one provides the essential background.

What an AI Security Operations Analyst Actually Does

The term AI security operations analyst describes a specific set of operational capabilities — not a vague promise of "AI-powered security." Here is what the role involves in concrete, operational terms.

Continuous Alert Triage

Triage is the process of assessing every incoming alert to determine its severity, relevance, and urgency. In a human-staffed SOC, this is Tier 1 work: the analyst reads the alert, decides whether it warrants investigation, and either closes it as a false positive or escalates it for deeper analysis.

An AI security operations analyst performs this triage continuously and at scale. It classifies each alert based on its type, source, affected asset, and initial indicators, then assigns a priority level. Critically, it does not simply apply static rules — modern agentic AI systems use contextual reasoning to assess whether an alert is significant given the specific environment. A login from an unusual country might be routine for a business with international staff but deeply suspicious for a business that operates only in the United Kingdom.
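The difference between a static rule and contextual triage can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how EmilyAI is implemented: the `Environment` and `LoginAlert` types, the travelling-users list, and the three-level priority scale are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Environment:
    """Hypothetical per-organisation context the triage step can consult."""
    operating_countries: Set[str]
    travelling_users: Set[str] = field(default_factory=set)


@dataclass
class LoginAlert:
    user: str
    country: str  # ISO 3166-1 alpha-2 code of the source IP's geolocation


def triage_priority(alert: LoginAlert, env: Environment) -> str:
    """Return 'low', 'medium' or 'high' for an unusual-country login alert.

    A static rule would score every foreign login identically; here the
    same alert is weighed against what is normal for this organisation.
    """
    if alert.country in env.operating_countries:
        return "low"     # routine for a business with staff in that country
    if alert.user in env.travelling_users:
        return "medium"  # plausible, but worth a human glance
    return "high"        # no known business reason for this location


uk_only = Environment(operating_countries={"GB"})
international = Environment(operating_countries={"GB", "US", "DE"})
alert = LoginAlert(user="j.smith", country="US")
print(triage_priority(alert, uk_only))        # high
print(triage_priority(alert, international))  # low
```

The same alert produces opposite priorities depending only on the environment it arrives in, which is the point the paragraph above makes about UK-only versus international businesses.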

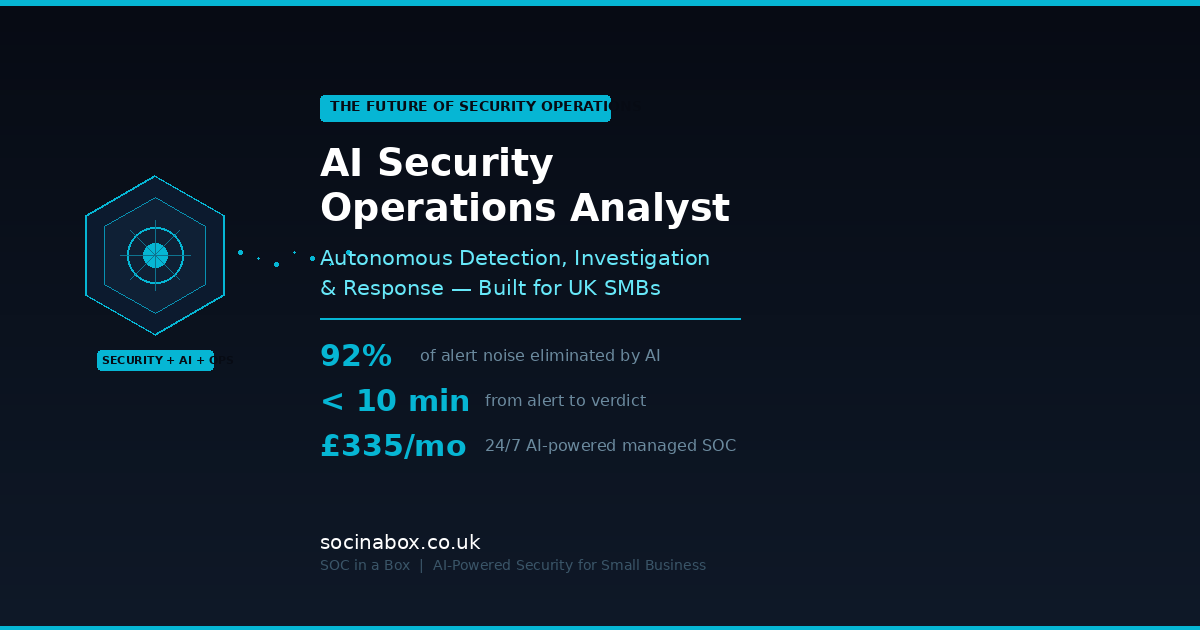

Our own EmilyAI engine, which has been in production for eight years, eliminates 92% of alert noise through this kind of contextual triage — ensuring that human analysts only see alerts that genuinely warrant their attention. Our detailed article on how EmilyAI works as a triage layer explains the mechanics of how this noise reduction operates in practice.

Autonomous Multi-Source Investigation

This is where the AI security operations analyst demonstrates capabilities that older automation simply cannot match. When an alert passes initial triage and warrants investigation, the AI does not follow a fixed script. It plans an investigation dynamically, choosing which tools to query, which data sources to examine, and which hypotheses to test — based on the specific characteristics of the alert.

For example, consider an alert triggered by a user downloading a suspicious file. A traditional automated playbook might check the file hash against a known-malware database and stop there. An AI security operations analyst goes further. It checks the file hash, but also examines the download source, reviews the user's recent activity for other anomalies, inspects the endpoint for signs of execution or persistence, queries email logs to see whether the user received a phishing message containing the link, and checks whether other users in the organisation received similar messages. If the file is unknown, it analyses the file's behaviour characteristics against patterns associated with known malware families.
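The file-download example can be sketched as an investigation plan that widens according to what the alert contains. In a real agentic system the model chooses and reorders steps at run time based on intermediate results; the fixed branching below, the `FileDownloadAlert` type, and every step name are hypothetical stand-ins used only to show how much wider the scope is than a single hash lookup.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FileDownloadAlert:
    user: str
    file_hash: str
    hash_known_bad: Optional[bool]  # None: no threat-intel feed recognised the hash
    clicked_from_email: bool        # e.g. inferred from the download's referrer


def plan_investigation(alert: FileDownloadAlert) -> List[str]:
    """Return an ordered list of (hypothetical) investigation steps for this alert."""
    # A minimal playbook would run only the first step and stop.
    steps = [
        "lookup_file_hash",
        "inspect_download_source",
        "review_user_recent_activity",
        "check_endpoint_for_execution_or_persistence",
    ]
    if alert.clicked_from_email:
        # Trace the delivery route and look for the same lure elsewhere.
        steps += [
            "find_delivering_email_in_mail_logs",
            "find_other_recipients_of_similar_messages",
        ]
    if alert.hash_known_bad is None:
        # Unknown file: fall back to behavioural comparison.
        steps.append("compare_behaviour_to_known_malware_families")
    return steps


alert = FileDownloadAlert("j.smith", "placeholder-sha256",
                          hash_known_bad=None, clicked_from_email=True)
for step in plan_investigation(alert):
    print(step)
```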

This multi-source, adaptive investigation replicates what a skilled Tier 2 or Tier 3 human analyst would do — but completes it in minutes rather than hours, and performs it for every alert rather than for the handful a human team has capacity to investigate each day.

Evidence Correlation Across the Kill Chain

Modern cyber attacks rarely consist of a single event. A phishing email leads to credential theft, which leads to a suspicious login, which leads to lateral movement, which leads to data exfiltration. Each step might generate its own alert in a different security tool. A human analyst looking at any single alert sees only one piece of the puzzle. Connecting the pieces requires querying multiple systems and recognising patterns across time and across tools — work that is time-consuming and error-prone when done manually.

An AI security operations analyst excels at this correlation. Because it has access to data from across your entire security stack simultaneously, it can identify connections between events that would take a human analyst hours to piece together. A suspicious login alone might not be alarming. But a suspicious login, followed by the creation of a mail forwarding rule, followed by access to a SharePoint folder containing financial data, followed by an outbound data transfer to an unfamiliar cloud storage service — that chain of events tells a clear story, and the AI identifies it as a coherent attack rather than four unrelated alerts.
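Correlation of this kind can be sketched as a search through one user's normalised event timeline for the four steps, in order, within a time window. The event type names, the 24-hour window, and the `Event` structure are illustrative assumptions; production systems match many different chains with far fuzzier logic.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Event:
    user: str
    kind: str        # normalised event type
    time: datetime
    source: str      # tool that reported the event


# The attack chain described above, in order (names are illustrative).
CHAIN = [
    "suspicious_login",                    # identity provider
    "mail_forwarding_rule_created",        # mail platform
    "sensitive_sharepoint_access",         # document store
    "outbound_transfer_unfamiliar_cloud",  # firewall or proxy
]


def find_chain(events: List[Event], user: str,
               window: timedelta = timedelta(hours=24)) -> Optional[List[Event]]:
    """Return the first in-order match of CHAIN for `user` within `window`, else None."""
    timeline = sorted((e for e in events if e.user == user), key=lambda e: e.time)
    for i, first in enumerate(timeline):
        if first.kind != CHAIN[0]:
            continue
        matched = [first]
        for e in timeline[i + 1:]:
            if e.time - first.time > window:
                break  # too slow from this login; try the next starting login
            if e.kind == CHAIN[len(matched)]:
                matched.append(e)
                if len(matched) == len(CHAIN):
                    return matched
    return None


t0 = datetime(2026, 3, 2, 9, 0)
sources = ["entra_id", "exchange", "sharepoint", "firewall"]
events = [Event("alice", kind, t0 + timedelta(hours=h), src)
          for h, (kind, src) in enumerate(zip(CHAIN, sources))]
chain = find_chain(events, "alice")
print([e.source for e in chain] if chain else "no chain")
```

Each event on its own might score low; it is the ordered combination across four different tools that lets the analysis treat them as one incident rather than four unrelated alerts.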

Transparent Verdict and Reporting

Every investigation conducted by an AI security operations analyst produces a structured report documenting the alert, the investigation steps taken, the evidence gathered, and the reasoning behind the verdict. This transparency is not optional — it is fundamental to trust, compliance, and operational accountability.

For alerts resolved as benign, the report provides an auditable record showing that the alert was investigated and found to be a false positive, along with the evidence supporting that conclusion. For alerts escalated as genuine threats, the report gives the human analyst or incident responder everything they need to take immediate, informed action: what happened, which systems and users are affected, the likely attack vector, a timeline of events, and recommended containment steps.
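A report like this is easiest to audit when it is structured data rather than free text. The sketch below shows one possible shape. The field names and example values are hypothetical and do not describe SOC in a Box's actual report format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class InvestigationReport:
    alert_id: str
    verdict: str      # "benign" or "malicious"
    confidence: str   # "low", "medium" or "high"
    reasoning: str
    steps_taken: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    # Populated mainly when the alert is escalated:
    affected_assets: List[str] = field(default_factory=list)
    likely_vector: str = ""
    timeline: List[str] = field(default_factory=list)
    recommended_actions: List[str] = field(default_factory=list)

    def escalate(self) -> bool:
        """Only malicious verdicts are passed to a human responder."""
        return self.verdict == "malicious"

    def to_json(self) -> str:
        """Serialise the whole report for an auditable record."""
        return json.dumps(asdict(self), indent=2)


report = InvestigationReport(
    alert_id="ALERT-0001",
    verdict="benign",
    confidence="high",
    reasoning="Sign-in location and device match the user's recent history.",
    steps_taken=["geolocate source IP", "compare with recent sign-in history",
                 "check MFA outcome"],
    evidence=["MFA completed on the user's registered device"],
)
print(report.escalate())
print(report.to_json())
```

Keeping the reasoning and evidence alongside the verdict means a benign closure can be reviewed later just as easily as an escalation.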

This reporting capability is particularly valuable for small businesses that need to demonstrate security diligence to regulators, insurers, or clients. The Confidence Score that SOC in a Box provides distils this operational data into a single, real-time metric that your board, insurer, and regulator can understand at a glance.

Automated and Assisted Response

Detection and investigation are only valuable if they lead to action. An AI security operations analyst can execute response actions either autonomously or with one-click human approval, depending on how the organisation's response policies are configured.

Common automated response actions include isolating a compromised endpoint from the network to prevent lateral movement, blocking a malicious IP address at the firewall, disabling a compromised user account or forcing a password reset, quarantining a suspicious email across all mailboxes in the organisation, and revoking active sessions for a user showing signs of account compromise.
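One way to express "autonomously or with one-click approval" is as a per-action policy table. The sketch below is hypothetical: the action names echo the list above, but which actions run unattended is an example configuration, not a recommendation or any product's default.

```python
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    NEEDS_APPROVAL = "needs_approval"


# Hypothetical organisation policy: which response actions may run unattended.
POLICY = {
    "isolate_endpoint": Mode.AUTONOMOUS,
    "block_ip_at_firewall": Mode.AUTONOMOUS,
    "quarantine_email_all_mailboxes": Mode.AUTONOMOUS,
    "revoke_user_sessions": Mode.AUTONOMOUS,
    "disable_user_account": Mode.NEEDS_APPROVAL,
    "force_password_reset": Mode.NEEDS_APPROVAL,
}


def dispatch(action: str, approved: bool = False) -> str:
    """Decide whether an action runs now, waits for a click, or is refused."""
    mode = POLICY.get(action)
    if mode is None:
        return f"refused: {action} is not in policy"  # fail closed
    if mode is Mode.AUTONOMOUS or approved:
        return f"executed: {action}"
    return f"pending approval: {action}"


print(dispatch("isolate_endpoint"))
print(dispatch("disable_user_account"))
print(dispatch("disable_user_account", approved=True))
```

Actions missing from the table are refused rather than executed, so a gap in the policy fails safe instead of letting an unreviewed action run.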

The speed of these responses matters enormously. The time between an attacker gaining initial access and beginning lateral movement is often measured in hours. Every minute saved in detection and response is a minute less the attacker has to cause damage. Our analysis of data breach costs for small UK businesses makes clear just how quickly costs escalate once an incident is not contained in its early stages.

How AI Security Operations Integrates With Existing Tools

One of the most practical questions small business owners ask about the AI security operations analyst is whether it requires ripping out existing security tools and starting from scratch. The answer is no — in fact, the AI works by connecting to and leveraging the tools you already have.

An AI security operations analyst operates as an integration layer that sits on top of your existing security stack. It connects to your tools via APIs, ingesting their alerts and querying their data during investigations. This means it works with your current EDR, your current email security, your current firewall, and your current cloud platform security — it does not replace them. It makes them collectively more useful by providing the investigative intelligence that turns a collection of disconnected tools into a coherent security operation.
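The normalisation that makes this possible can be sketched as small adapters, one per tool, each mapping a vendor's payload onto a common schema. The two payload layouts below are invented for illustration and do not reflect any real vendor's API. The 1 to 4 severity scale is likewise an assumption.

```python
from dataclasses import dataclass
from typing import Any, Dict, Optional


@dataclass
class NormalisedAlert:
    source: str
    user: Optional[str]
    host: Optional[str]
    title: str
    severity: int  # assumed common scale: 1 (low) to 4 (critical)


EDR_SEVERITY = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def from_edr(raw: Dict[str, Any]) -> NormalisedAlert:
    """Adapter for a made-up EDR payload with text severities."""
    return NormalisedAlert(
        source="edr",
        user=raw.get("userName"),
        host=raw.get("deviceName"),
        title=raw["title"],
        severity=EDR_SEVERITY[raw["severity"].lower()],
    )


def from_firewall(raw: Dict[str, Any]) -> NormalisedAlert:
    """Adapter for a made-up firewall payload where priority 1 is most urgent."""
    priority = min(max(int(raw["priority"]), 1), 4)  # clamp to 1..4
    return NormalisedAlert(
        source="firewall",
        user=None,  # firewalls often see addresses, not identities
        host=raw.get("src_host"),
        title=raw["msg"],
        severity=5 - priority,  # invert so 4 is always the most severe
    )


print(from_edr({"severity": "High", "userName": "j.smith",
                "deviceName": "LAPTOP-01", "title": "Suspicious PowerShell"}))
print(from_firewall({"priority": "1", "src_host": "10.0.0.5", "msg": "Port scan"}))
```

Once every tool's alerts share one shape, correlation logic only has to be written once rather than once per pair of products.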

For most small businesses, the practical security stack that an AI security operations analyst integrates with includes Microsoft 365 (including Defender for Office 365 and Entra ID), an endpoint detection and response tool (whether Microsoft Defender for Endpoint, SentinelOne, CrowdStrike, or similar), a firewall or network security appliance, and potentially a cloud security posture management tool if you use AWS, Azure, or Google Cloud.

The SOC in a Box platform connects to all of these through the SOC365 detection engine, normalising data from different sources into a unified view and enabling EmilyAI to correlate events across your entire environment. Deployment typically takes five working days from order to live monitoring — with no disruption to your existing operations.

The Human-AI Partnership in Practice

The most effective security operations models in 2026 are not purely human or purely AI. They are partnerships where each side handles what it does best. Understanding this partnership is key to getting the most value from an AI security operations analyst.

What AI Handles Best

High-volume, repetitive triage where consistency matters more than creativity. Multi-source data correlation where speed and completeness are critical. Pattern recognition across large datasets where human attention would wane long before the analysis was complete. Round-the-clock monitoring without gaps, fatigue, or shift-change handover errors. Consistent application of investigation methodology: the AI never skips a step because it is in a hurry or because the alert looks similar to one it closed five minutes ago.

The 2026 SANS Cybersecurity Workforce Report found that organisations now expect AI to autonomously resolve over 90% of Tier 1 alerts, covering triage, initial enrichment, categorisation, and even some containment actions. That expectation is grounded in what production AI systems are already delivering rather than in theoretical prediction.

What Humans Handle Best

Business context that AI cannot learn from telemetry alone — understanding that a particular user's unusual behaviour is because they are travelling for a conference, not because their account is compromised. Strategic decisions about risk tolerance, response priorities, and how security policies should evolve. Communication with stakeholders during an active incident — explaining what is happening, what the business impact is, and what actions need to be taken. Complex, novel attack scenarios that do not match any pattern the AI has been trained on. Ethical and legal judgment calls about how to respond to incidents involving insider threats, law enforcement, or regulatory disclosure.

In our model, this human layer is delivered through a named, CREST-certified analyst who learns your network, your users, and your escalation preferences. They are not a faceless ticket queue — they are a dedicated security professional who understands your business context and provides the human judgment that AI cannot replicate.

Why the Partnership Outperforms Either Alone

AI without human oversight produces occasional errors that go uncorrected, eroding trust and potentially missing sophisticated threats designed to evade automated analysis. Humans without AI assistance are overwhelmed by volume, prone to fatigue-driven mistakes, and unable to maintain consistent coverage across a full 24/7 cycle. The partnership delivers comprehensive coverage (AI handles volume), accurate verdicts (humans verify edge cases), fast response (AI acts in minutes), and strategic depth (humans provide context and judgment).

This is why the industry consensus in 2026 is not "AI replaces analysts" but "AI transforms the analyst role." The AI security operations analyst handles the operational throughput. The human analyst becomes a supervisor, strategist, and threat hunter — a far more valuable and fulfilling role than manual alert triage.

Real-World Operational Scenarios

To make the AI security operations analyst concept tangible, here is how it handles three common threat scenarios that UK small businesses face daily.

Scenario 1: Business Email Compromise Attempt

A finance team member receives an email that appears to come from the managing director, requesting an urgent bank transfer. The email security gateway flags it as potentially suspicious but does not block it outright — the sender domain is slightly misspelled but the content is well-crafted.

The AI security operations analyst ingests the alert from the email gateway. It examines the sender domain and identifies the typosquat. It checks whether other users in the organisation received emails from the same domain. It reviews the finance team member's recent email activity for signs they have already interacted with the message. It queries the dark web monitoring feed for any indication that the managing director's email credentials have been compromised. Within minutes, it produces a verdict: confirmed phishing attempt with high confidence, likely business email compromise targeting the finance function. It automatically quarantines the email from all mailboxes, adds the sender domain to the block list, and escalates to the named analyst with a recommendation to alert the finance team and review recent outbound payment requests.
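One way to spot a look-alike sender domain is to count how many single-character edits separate it from a domain the business trusts. The sketch below uses Levenshtein distance with an assumed threshold of two edits; `examplefirm.co.uk` is a placeholder. It does not catch every trick (Unicode homoglyphs and extra subdomains need separate checks), so treat it as one signal among several.

```python
from typing import Set


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum single-character edits turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution (free if equal)
            ))
        prev = curr
    return prev[-1]


def looks_like_typosquat(sender_domain: str, trusted_domains: Set[str],
                         max_distance: int = 2) -> bool:
    """Flag domains that are close to, but not exactly, a trusted domain."""
    d = sender_domain.lower()
    if d in trusted_domains:
        return False
    return any(edit_distance(d, t) <= max_distance for t in trusted_domains)


trusted = {"examplefirm.co.uk"}
print(looks_like_typosquat("examp1efirm.co.uk", trusted))  # True: "l" swapped for "1"
print(looks_like_typosquat("examplefirm.co.uk", trusted))  # False: exact match
```

A fixed threshold of two edits would be too loose for very short domains, so a real check would scale it with domain length.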

Without the AI security operations analyst, this alert sits in a queue. The finance team member, under pressure from what they believe is a genuine request from their managing director, processes the payment. The business loses tens of thousands of pounds. For more on how these attacks work, our guide on phishing and business email compromise explains the tactics and warning signs in detail.

Scenario 2: Ransomware Precursor Activity

An endpoint detection tool flags unusual PowerShell activity on a workstation belonging to a mid-level employee. The script appears to be enumerating network shares and attempting to discover other machines on the network.

The AI security operations analyst investigates immediately. It examines the PowerShell execution history on the endpoint, identifying that the script was launched from a temporary directory — a common indicator of malware execution. It checks the user's recent login activity and discovers a successful login from an unfamiliar IP address two hours earlier. It queries the email gateway and finds that the user clicked a link in a phishing email earlier that morning. It correlates the network discovery activity with threat intelligence feeds and identifies the pattern as consistent with the reconnaissance phase of a known ransomware group's attack methodology.
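The "launched from a temporary directory" indicator is simple enough to show directly. The directory list below is a short, assumed sample of commonly abused Windows locations, not a complete detection rule. On its own it would be noisy, which is why the investigation goes on to correlate login, email, and threat-intelligence evidence.

```python
from pathlib import PureWindowsPath

# Assumed sample of user-writable locations often used to stage malicious scripts.
SUSPICIOUS_DIRS = (
    "\\appdata\\local\\temp\\",
    "\\windows\\temp\\",
    "\\users\\public\\",
)


def launched_from_temp(script_path: str) -> bool:
    """True if the script's parent directory sits under a commonly abused location."""
    # Trailing backslash lets fragments match whole directory names only.
    parent = str(PureWindowsPath(script_path).parent).lower() + "\\"
    return any(fragment in parent for fragment in SUSPICIOUS_DIRS)


for path in (r"C:\Users\bob\AppData\Local\Temp\enum.ps1",
             r"C:\IT\Scripts\backup.ps1"):
    print(path, "->", launched_from_temp(path))
```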

The AI isolates the endpoint from the network automatically, disables the user's account pending investigation, and escalates to the human analyst with a complete timeline: phishing email received, link clicked, credential harvested, attacker logged in remotely, malware deployed, reconnaissance begun. The human analyst coordinates the broader response — checking other endpoints for similar indicators, resetting credentials, and communicating with the business owner about the near-miss.

Without early detection, this sequence progresses to file encryption within hours. Our ransomware guide for small UK businesses documents what that progression looks like and the devastating impact it has on businesses that do not detect it early.

Scenario 3: Insider Data Exfiltration

A departing employee, two weeks from their last day, begins downloading large volumes of client data from the company's CRM system and uploading it to a personal cloud storage account.

The AI security operations analyst detects the anomaly through a combination of DLP (Data Loss Prevention) alerts and behavioural analysis. The volume and pattern of downloads significantly exceed the user's normal activity. The destination, a personal cloud storage service, is outside the organisation's approved tools. The timing, close to a known departure date, provides additional context.
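The three signals in this scenario (unusual volume, an unapproved destination, and proximity to a departure date) can be sketched as below. The three-standard-deviation threshold, the 30-day window, and the allow-list are assumptions chosen for illustration; a real deployment would tune them per organisation, and the output is evidence for a human to weigh, not an automatic block.

```python
from datetime import date
from statistics import mean, stdev
from typing import List, Optional

# Hypothetical allow-list of sanctioned upload destinations.
APPROVED_DESTINATIONS = {"sharepoint", "onedrive_business"}


def volume_zscore(history_mb: List[float], today_mb: float) -> float:
    """How many standard deviations today's volume sits above the user's baseline."""
    mu, sigma = mean(history_mb), stdev(history_mb)  # stdev needs >= 2 data points
    if sigma == 0:
        return 0.0 if today_mb <= mu else float("inf")
    return (today_mb - mu) / sigma


def insider_signals(history_mb: List[float], today_mb: float, destination: str,
                    today: date, leaving_date: Optional[date],
                    z_threshold: float = 3.0,
                    departure_window_days: int = 30) -> List[str]:
    """Collect human-readable signals; an empty list means nothing stood out."""
    signals = []
    if volume_zscore(history_mb, today_mb) > z_threshold:
        signals.append("download volume far above this user's baseline")
    if destination not in APPROVED_DESTINATIONS:
        signals.append(f"upload to unapproved destination: {destination}")
    if leaving_date is not None and 0 <= (leaving_date - today).days <= departure_window_days:
        signals.append("activity close to a known departure date")
    return signals


history = [100, 120, 90, 110, 105]  # MB downloaded per day over a recent week
for s in insider_signals(history, 2000, "personal_cloud",
                         date(2026, 3, 2), date(2026, 3, 16)):
    print(s)
```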

The AI flags the activity as a high-confidence insider threat, generates a detailed evidence package documenting every file accessed and every upload, and escalates to the human analyst. Critically, it does not automatically block the user — insider threat scenarios often have legal and HR dimensions that require human judgment. The human analyst consults with management and HR, and a coordinated response is planned.

Without an AI security operations analyst monitoring data flows, the departing employee walks out with the client list and the business only discovers the theft months later — if ever.

The Skills and Workforce Context

The emergence of the AI security operations analyst is inseparable from the workforce crisis facing the cyber security industry. The numbers tell a stark story.

The global cyber security workforce gap stands at approximately 4.8 million unfilled roles. In the UK, 637,000 businesses lack basic cyber security skills. The same SANS report found that 60% of organisations identify skills gaps as a greater challenge than headcount shortages, and 27% have experienced breaches directly attributable to workforce capability gaps. Entry-level SOC analyst roles have seen a 32% reduction as AI takes over routine triage work, while demand for new specialist roles (AI security engineers, AI governance analysts, ML security specialists) has nearly doubled year-over-year.

For small businesses, this workforce picture has a specific implication: you were never going to hire your way out of this problem. The talent is scarce, expensive, and in demand from organisations that can offer higher salaries and more interesting work. The AI security operations analyst, delivered through a managed service, is how small businesses access genuine security operations capability without competing for talent they cannot attract or afford.

Compliance and Governance Considerations

Deploying an AI security operations analyst — whether directly or through a managed service — raises legitimate questions about compliance, data handling, and governance that responsible businesses should address.

UK GDPR and data processing. An AI system that monitors your IT environment will process personal data — login records, email metadata, user activity logs. Ensure your provider has appropriate data processing agreements in place and that data residency meets your requirements. For UK businesses, data should ideally be processed and stored within the UK or the EU.

Auditability and explainability. Regulators, insurers, and clients may ask how your security monitoring works and what decisions are being made automatically. The AI security operations analyst must produce auditable investigation trails that can be reviewed, questioned, and justified. Black-box AI that cannot explain its reasoning is a compliance liability.

AI governance standards. The push toward ISO/IEC 42001 certification, the international standard for AI management systems, is gaining momentum in the security industry. Certification is not yet mandatory, but organisations deploying AI in security operations should be aware of emerging governance frameworks and check that their provider aligns with them.

Cyber Essentials alignment. For UK small businesses holding or pursuing Cyber Essentials Certification, a managed SOC with an AI security operations analyst provides the continuous monitoring, logging, and incident response capability that supports and extends the scheme's five technical controls. Certification handles prevention. AI-powered operations handle detection and response.

Choosing the Right Approach for Your Business

There are three realistic paths to adopting AI security operations analyst capability, depending on your size, budget, and existing resources.

Fully managed SOC service (recommended for most SMBs). This is the most practical route for businesses under 250 employees. A managed provider deploys and operates the AI-powered detection engine, pairs it with human analysts for escalation and complex investigations, and delivers the service as a monthly subscription. You get all the benefits of an AI security operations analyst without needing to understand or manage the underlying technology. SOC in a Box follows this model — the SOC365 engine and EmilyAI handle the operational throughput, a named CREST-certified analyst provides human oversight, and you get a single invoice covering the entire operation. The savings calculator shows exactly how this compares to your current piecemeal security spend.

Co-managed model. If you have an internal IT team with some security capability, a co-managed approach works well. The AI platform handles triage and investigation, your internal team handles day-to-day response for routine items, and the managed provider's expert analysts handle complex incidents and strategic guidance. This model suits businesses in the 100–500 employee range that have IT staff but not dedicated security specialists.

Direct platform deployment. For larger or more mature organisations with existing SOC teams, deploying an AI SOC platform directly can augment your team's capacity. This approach requires significant internal expertise to configure, tune, and manage the platform, and is generally only appropriate for businesses with dedicated security personnel. Most SMBs should avoid this route — the operational overhead negates the benefits for businesses without in-house security expertise.

Getting Started: A Practical Roadmap

If you are ready to move from theory to action, here is a practical sequence for adopting AI security operations analyst capability in your business.

Month 1: Foundations. Ensure your baseline security controls are in place. Enable MFA on every cloud service. Apply outstanding security updates. Review user access controls. If you have not already, pursue Cyber Essentials Certification — it costs from £320 and ensures the five fundamental controls are properly implemented. A well-configured baseline dramatically improves the effectiveness of any monitoring layered on top.

Month 2: Scoping and selection. Assess your current security tooling, identify what telemetry is available, and engage potential managed SOC providers for scoping calls. Ask specific questions: what data sources do you integrate with? How does your AI triage work? What does escalation look like? Who is my named analyst? How quickly can you deploy? Our deployment page walks through exactly what the process looks like with SOC in a Box — five working days from order to live 24/7 monitoring.

Month 3: Deployment and tuning. Go live with monitoring. The first few weeks will involve tuning — the AI and your named analyst will learn the patterns of normal activity in your environment, reducing false positives and calibrating detection sensitivity. Expect to engage more actively during this period, providing context about expected user behaviours, approved applications, and known exceptions.
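Known exceptions captured during tuning can be represented as narrowly scoped suppression rules. The sketch below is hypothetical. The design choice worth noting is the mandatory expiry date, so that a temporary exception (for example, a user travelling to a conference) does not quietly become a permanent blind spot.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class KnownException:
    rule: str             # detection rule the exception applies to
    user: Optional[str]   # None means any user
    expires: date         # exceptions lapse unless deliberately renewed
    reason: str


def is_suppressed(rule: str, user: str, today: date,
                  exceptions: List[KnownException]) -> bool:
    """True if an unexpired exception covers this rule and user."""
    return any(
        ex.rule == rule and ex.user in (None, user) and today <= ex.expires
        for ex in exceptions
    )


exceptions = [KnownException("unusual_country_login", "j.smith",
                             date(2026, 4, 30), "At a conference abroad")]
print(is_suppressed("unusual_country_login", "j.smith", date(2026, 4, 10), exceptions))  # True
print(is_suppressed("unusual_country_login", "j.smith", date(2026, 5, 1), exceptions))   # False
```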

Month 4 onwards: Operational maturity. With tuning complete, the service settles into steady-state operation. Review monthly reports, act on recommendations from your named analyst, and use the visibility your new monitoring provides to continuously improve your security posture. The AI security operations analyst handles the operational throughput. You focus on running your business.

Conclusion: Security Operations Is No Longer Out of Reach

For too long, genuine security operations — continuous monitoring, expert investigation, rapid response — has been a capability reserved for large organisations with large budgets. The AI security operations analyst changes that equation decisively.

It investigates every alert, around the clock, at a speed and consistency that human-only teams cannot match. It correlates evidence across your entire environment, identifying complex attack chains that would take hours to piece together manually. It escalates genuine threats with complete investigation reports, enabling human experts to take immediate, informed action. And it does all of this at a cost that small businesses can genuinely afford.

The UK's National Cyber Security Centre is clear: cyber attacks are a question of when, not if. The businesses that survive and thrive will be the ones that detect and respond to threats quickly, not the ones that discover them weeks or months later. An AI security operations analyst, delivered through a managed service built for businesses your size, is how you make that detection and response a reality.

Your business is too important to leave unmonitored. And in 2026, there is no longer any reason it should be.

Get AI-Powered Security Operations

SOC in a Box delivers EmilyAI — eight years in production, 92% noise reduction — paired with a named CREST-certified analyst. One box. One invoice. Five days to live monitoring. From £335/month.
